The University of Oslo's case for peer review in large-enrollment courses

The University of Oslo is Norway's oldest and most research-intensive public university. Its Department of Informatics serves hundreds of first-year students each year across six study programs, with a strong emphasis on active learning, formative assessment, and student-centered pedagogy.

Omid Mirmotahari

Associate Professor, Department of Informatics, University of Oslo

Omid Mirmotahari is an associate professor at the University of Oslo with a research focus on assessment, feedback literacy, and first-year student learning in computer science education. He has published extensively on peer and self-assessment in higher education and has presented his work at international education conferences.

Yngvar Berg

Professor, Department of Informatics, University of Oslo

Yngvar Berg is a professor at the Department of Informatics with expertise in computer architecture, digital systems, and engineering education. Together, Mirmotahari and Berg designed and led the controlled pilot study that forms the basis of this case study.

Context

Based on the CRAFT project (Collaborative Review and Feedback Technology), this case study explores how the University of Oslo's Department of Informatics used FeedbackFruits Peer Review to redesign formative feedback in a 500+ student introductory course. A rigorously controlled pilot showed that structured peer assessment can outperform traditional TA feedback without increasing the team's workload. Full findings, including the white-paper report and the peer-reviewed conference paper (Best Paper Award, Udit 2024), are available on the project page.

Assessment of learning outcomes

THE CHALLENGE

Scaling feedback without scaling cost

IN1020 (Introduction to Computer Science) is one of Oslo's largest foundational courses, enrolling around 550 students per year. The course is built around a draft-to-revision cycle. Students submit a first draft, receive feedback, then revise and submit a final version. That cycle only works if the feedback is timely and useful.

As the cohort grew, the cracks became harder to ignore:

What was going wrong as the cohort grew

- Feedback quality varied significantly between the 18 different teaching assistants.

- Turnaround times became harder to guarantee as student numbers increased.

- Coordination overhead for the teaching team grew every year.

- The model did not scale. More students simply meant more staff, indefinitely.

Before making any changes, the team established clear research questions. They wanted hard data, not assumptions.

The questions they set out to answer

- Can peer assessment work as a genuine alternative to TA feedback, and not just a cheaper one?

- Which feedback type drives the most improvement from first draft to final submission?

- Do those benefits carry through to the final exam, weeks later?

THE PILOT, FALL 2023

Three groups. One course. One semester.

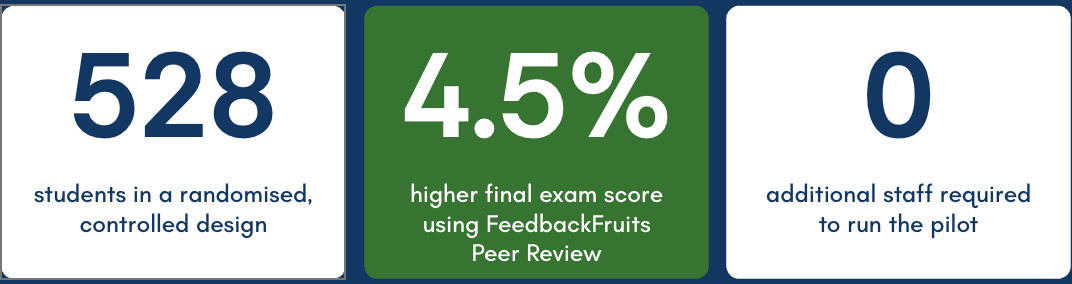

528 students in IN1020 were randomly assigned to one of three feedback conditions. The assignment, deadlines, and grading criteria were identical across all groups. Only the type of feedback changed. Random assignment ensured all three groups started from the same ability level, confirmed by nearly identical grade distributions on the first draft.
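The case study does not describe how the randomization was carried out. As a minimal sketch only, a shuffle-and-split assignment with the reported group sizes could look like the following; the student IDs, the `assign_groups` helper, and the seed are hypothetical and used purely for illustration.

```python
import random


def assign_groups(student_ids, group_sizes, seed=None):
    """Randomly split student_ids into named groups of the given sizes."""
    if sum(group_sizes.values()) != len(student_ids):
        raise ValueError("group sizes must add up to the number of students")
    rng = random.Random(seed)      # seeded so the split can be reproduced
    shuffled = list(student_ids)   # copy, so the caller's list is untouched
    rng.shuffle(shuffled)
    groups, start = {}, 0
    for name, size in group_sizes.items():
        groups[name] = shuffled[start:start + size]
        start += size
    return groups


# Hypothetical student IDs; the group sizes are those reported for the pilot.
students = [f"s{i:03d}" for i in range(528)]
sizes = {"peer": 172, "self": 183, "ta": 173}
groups = assign_groups(students, sizes, seed=2023)
```

Because every student has the same chance of landing in each condition, systematic differences in prior ability between groups become unlikely, which is what the near-identical first-draft grades were used to confirm.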

Group 1 — Peer Assessment with FeedbackFruits (172 students)

- Tool: FeedbackFruits Peer Review in Canvas

- Task: Each student reviewed three peers with open-ended feedback using a structured rubric

- Scaffolding: Minimal, by design, to test the raw value of peer exposure

- Allocation: Automatically distributed by FeedbackFruits

Group 2 — Self-Assessment with FeedbackFruits (183 students)

- Tool: FeedbackFruits Self Assessment

- Task: Students evaluated their own first draft against a structured rubric

- Scaffolding: Rubric combined Likert scale, binary, and open-ended questions

- Approach: Socratic, focused on metacognitive awareness

Group 3 — Traditional TA Feedback (173 students)

- Tool: Written comments by teaching assistants

- Task: 18 TAs each reviewed and commented on student drafts

- Scaffolding: None, TAs had full freedom in style and format

- Approach: The existing model, used as the control condition

THE PLATFORM

Why FeedbackFruits made this possible

A controlled study at this scale does not run on goodwill and spreadsheets. FeedbackFruits embedded the entire peer review workflow inside Canvas, making the pilot operationally viable without adding a single hour of administrative work for the teaching team.

"The feature I was most satisfied with was the ability to enter our own criteria sections, where we could use a self-developed assessment guide that the students have become familiar with earlier in their studies."

— Marthe Bogen, Administrator, Faculty of Humanities

Automatic allocation: FeedbackFruits distributed 172 students into peer groups instantly, ensuring no student reviewed the same peer twice. Done manually, this would have taken hours in a spreadsheet.

Native Canvas Integration: Submission and review flows ran entirely within Canvas. Students never had to leave their existing environment, reducing friction and increasing completion rates.

Participation analytics: The team could see at a glance who had submitted, who had completed their review, and who needed a nudge, across all 500+ students, in real time.

Flexible deadline management: Per-student deadline extensions were grantable in seconds without disrupting the rest of the cohort. The system handled last-minute group changes instantly.
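How FeedbackFruits allocates reviewers internally is not described in this case study. To illustrate why automating the automatic allocation step above saves manual effort, here is one common approach, sketched under stated assumptions: shuffle the students into a ring and have each one review the next three. The `allocate_reviews` function and the student IDs are hypothetical.

```python
import random


def allocate_reviews(student_ids, reviews_per_student=3, seed=None):
    """Map each student to the peers they review, using a shuffled ring."""
    n = len(student_ids)
    if n <= reviews_per_student:
        raise ValueError("need more students than reviews per student")
    rng = random.Random(seed)
    ring = list(student_ids)
    rng.shuffle(ring)
    # Offsets 1..k around the ring are distinct and never zero, so nobody
    # reviews themselves or the same peer twice, and everyone is reviewed
    # exactly k times.
    return {
        ring[i]: [ring[(i + k) % n] for k in range(1, reviews_per_student + 1)]
        for i in range(n)
    }


# Hypothetical IDs for the 172 students in the peer assessment group.
peer_group = [f"s{i:03d}" for i in range(172)]
allocation = allocate_reviews(peer_group, seed=1)
```

The ring construction keeps the review load balanced in both directions: each student writes three reviews and receives three.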

THE RESULTS

Peer Review produced the highest exam scores

The final exam was taken weeks after the intervention ended, assessed completely independently, and covered the full course, not just the assignment topic. This is the hardest test of whether a feedback method builds lasting understanding.

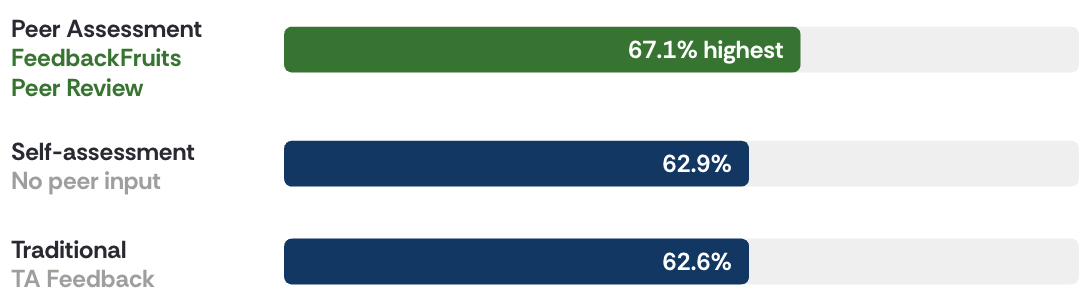

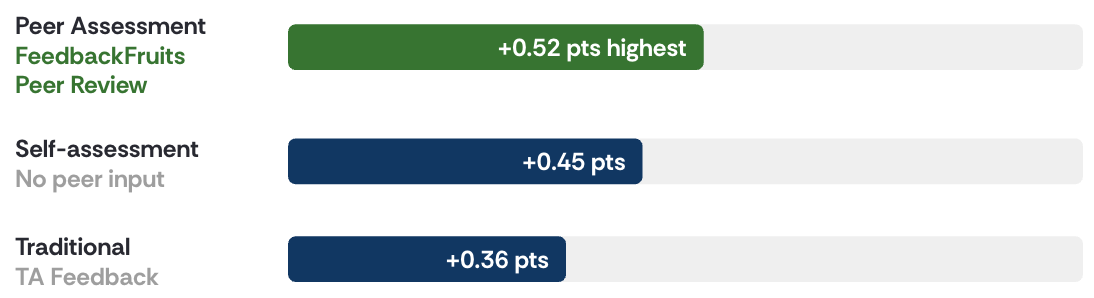

Average Final Exam Score

4.5 percentage points higher on the final exam: the gap between FeedbackFruits Peer Review and traditional TA feedback. This advantage was measured on a completely separate, independently graded assessment taken weeks after the initial assessment.

Peer Review also produced the highest learning gains

From first draft to final submission, all three groups improved significantly. The question was by how much, and the answer was consistent: FeedbackFruits Peer Review outperformed both alternatives.

Average Learning Gain: First Draft to Final Submission (4-point scale, -1 to 3)

15% greater improvement on the assignment was achieved through FeedbackFruits Peer Review compared to TA feedback, even though the peer group received only minimal scaffolding.

What the numbers mean

- All feedback methods drive meaningful improvement, a positive finding across all three groups. Peer Review, however, produced the best results.

- Peer assessment produced the largest learning gains on the assignment (+0.52 vs +0.45 for TA).

- Peer assessment produced the highest final exam scores (67.1% vs 62.6% for TA) — the most compelling and robust result.

The study used a robust statistical design.
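The headline figures can be reproduced from the reported group means. The short calculation below is a sketch using only the rounded values quoted in this case study, so the relative gain comes out at roughly 15 to 16 percent; the published 15% presumably rests on unrounded means, which is an assumption.

```python
# Figures as reported in the case study (rounded means).
exam_peer, exam_ta = 67.1, 62.6   # mean final exam score, in percent
gain_peer, gain_ta = 0.52, 0.45   # mean draft-to-final gain, in rubric points

# Absolute difference between two percentages, in percentage points.
exam_gap_points = round(exam_peer - exam_ta, 1)

# Relative difference between the two learning gains, in percent.
relative_gain_pct = round((gain_peer / gain_ta - 1) * 100, 1)

print(f"Exam gap: {exam_gap_points} percentage points")
print(f"Peer gain relative to TA gain: {relative_gain_pct}% larger")
```

The distinction matters when reading the results: the exam gap is an absolute difference between two percentages, while the learning-gain figure is a relative difference between two rubric-point gains.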

IMPACT

What changed for students and educators

"The system is easy to use and has a clear structure. Once you've been through the process of setting up a peer-review assignment, it's easy to create new tasks…then it's self-explanatory."

— Douwtje Lieuwkje van der Meulen, Course Teacher, Faculty of Humanities

For students

- Reviewing peers' work in FeedbackFruits exposed students to multiple different solution approaches, deepening conceptual understanding beyond their own single attempt.

- The revision process became active and purposeful. FeedbackFruits structured the feedback so students received specific, contextual comments from peers who had just worked through the same problems.

- The 4.5 percentage point exam advantage shows that FeedbackFruits Peer Review builds transferable understanding, not just short-term assignment improvement.

For educators

- FeedbackFruits reduced dependence on TA feedback for every draft. The team scaled formative assessment to 500+ students without scaling headcount.

- More consistent feedback across the full cohort. FeedbackFruits Peer Review is not subject to variation between 18 individual TAs with different styles and standards.

- Full participation visibility through FeedbackFruits analytics. The team knew exactly who had completed each step without any manual tracking.

- Zero additional workload. FeedbackFruits handled allocation, reminders, and deadline management automatically inside Canvas.

CONCLUSION

Scaling feedback without compromising quality

This study shows that scaling feedback does not have to mean accepting lower quality. By redesigning feedback as an active learning process and supporting it with the right structure and technology, the University of Oslo demonstrated that students can learn more effectively from each other than from traditional TA feedback alone.

Through a carefully controlled, statistically validated study with 528 students, the Department of Informatics has built an evidence base for peer assessment that holds up not just on the assignment itself, but on the final exam.

Research originally published in Norwegian by Omid Mirmotahari and Yngvar Berg, Department of Informatics, University of Oslo. Sources: Implementation report, University of Oslo MATNAT (2024). Research paper, Mirmotahari and Berg, NIKT 2024. Study approved by SIKT.
